Trying the k3s quick start on CentOS 7

What this article covers

- Try the quick start published by Rancher.

- Build a k3s server and agent on CentOS 7 running on VMware Fusion.

- Start an nginx pod and expose it externally.

- Uninstall k3s.

References

- k3s GitHub repository: github.com

Motivation

- Preparing an environment every time I taught Kubernetes to people around me was tedious.

- I wanted a lightweight Kubernetes environment for testing.

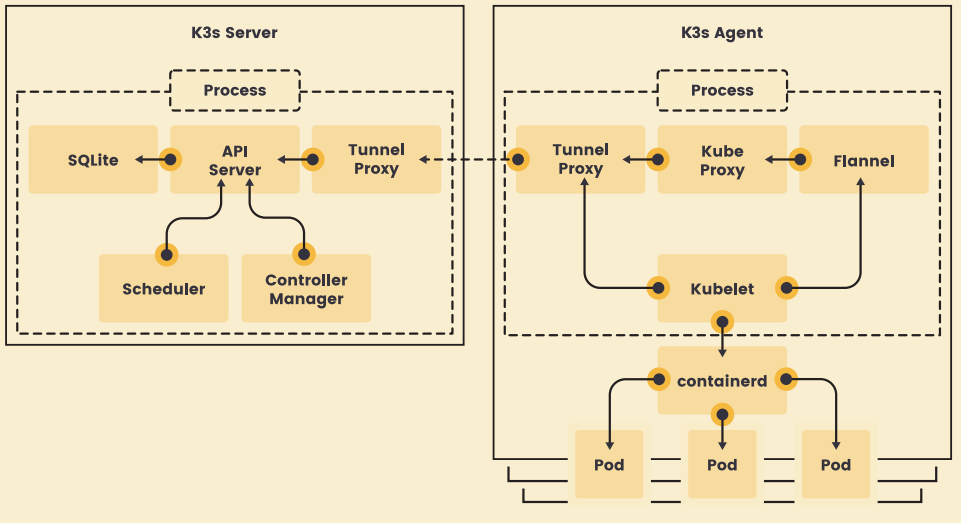

k3s architecture

First, let's take a quick look at the k3s architecture.

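- The Kubernetes components run in a single process.

- SQLite is embedded in place of etcd.

- The server and agents communicate through a tunnel proxy over WebSocket.

- The default CNI is flannel, but it can be replaced with Canal or Calico.

- The container runtime can likewise be switched from containerd to Docker.

- SQLite can also be replaced with MariaDB, MySQL, etcd, or PostgreSQL.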
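The component swaps above are selected with server flags at install time. The sketch below makes several assumptions:

- The flags are the k3s v1.19-era `--docker`, `--flannel-backend=none` and `--datastore-endpoint`.
- They are passed through the installer's `INSTALL_K3S_EXEC` variable.
- The helper `k3s_server_exec` is hypothetical.

The helper only assembles the command line and installs nothing. With `--flannel-backend=none` you would still have to install Canal or Calico yourself. Check the k3s datastore documentation for the exact endpoint URL format.

```shell
#!/bin/sh
# Hypothetical helper: print a k3s server command line for the swaps above.
# It only prints; nothing is installed or started.
#   $1 runtime:   "containerd" (default) or "docker"
#   $2 cni:       "flannel" (default) or "none" (then install Calico/Canal yourself)
#   $3 datastore: empty for the embedded SQLite, otherwise a datastore URL
k3s_server_exec() {
  runtime=$1
  cni=$2
  datastore=$3
  args="server"
  if [ "$runtime" = "docker" ]; then
    args="$args --docker"
  fi
  if [ "$cni" = "none" ]; then
    args="$args --flannel-backend=none"
  fi
  if [ -n "$datastore" ]; then
    args="$args --datastore-endpoint=$datastore"
  fi
  printf '%s\n' "$args"
}
```

As a usage example: `curl -sfL https://get.k3s.io | INSTALL_K3S_EXEC="$(k3s_server_exec docker none '')" sh -`. With `--docker`, Docker must already be installed on the host.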

Test environment

- macOS version

```
$ sw_vers
ProductName:    Mac OS X
ProductVersion: 10.15.7
BuildVersion:   19H15
```

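VMware Fusion version

CentOS 7 version and environment overview

Because this is only a test setup, every command in this article was run as the root user.

- The server's IP address is assigned by VMware Fusion via DHCP.

- The server and the agent have the following specs: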

| Item | server | agent |
|---|---|---|
| OS | CentOS Linux release 7.7.1908 (Core) | CentOS Linux release 7.7.1908 (Core) |
| IP address | 192.168.128.23/16 | 192.168.128.26/16 |
| CPU | 1 | 1 |
| Memory | 2 GB | 2 GB |
| Disk | 20 GB | 20 GB |

Quick start

That was a long preamble, but all you actually do is run one command on the server and one on the agent.

- On the server

```
# curl -sfL https://get.k3s.io | sh -
...
[INFO]  systemd: Starting k3s
```

- Wait a while. Once `Starting k3s` appears, get the token the agent needs to join the cluster.

```
# cat /var/lib/rancher/k3s/server/node-token
K107276dbacffd6b33672da6b084aed88df8f97e91fbb55789bc94da51a9868a342::server:1ba7e70e48330ce8f268d98fcbb6dc44
```
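The token file may not exist yet right after the installer returns. Instead of waiting by eye, you can script the wait. This is a minimal sketch that assumes a non-empty `node-token` file means the token is ready to read. The helper name `wait_for_file` and the 60-second default are my own choices, not part of k3s.

```shell
#!/bin/sh
# Hypothetical helper: wait until a file exists and is non-empty, then print it.
# Returns non-zero after $2 seconds (default 60) if the file never appears.
wait_for_file() {
  file=$1
  timeout=${2:-60}
  elapsed=0
  while [ ! -s "$file" ]; do
    if [ "$elapsed" -ge "$timeout" ]; then
      echo "timed out waiting for $file" >&2
      return 1
    fi
    sleep 1
    elapsed=$((elapsed + 1))
  done
  cat "$file"
}
```

On the server this would be used as `TOKEN=$(wait_for_file /var/lib/rancher/k3s/server/node-token 120)`.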

- On the agent, run the installer with the server URL and the token:

```
# curl -sfL https://get.k3s.io | K3S_URL=https://192.168.128.23:6443 \
    K3S_TOKEN=K107276dbacffd6b33672da6b084aed88df8f97e91fbb55789bc94da51a9868a342::server:1ba7e70e48330ce8f268d98fcbb6dc44 \
    sh -
...
[INFO]  systemd: Starting k3s-agent
```

Wait a while. Once `Starting k3s-agent` appears, the agent setup is complete.
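Before pasting the token into the agent command, a quick format check catches copy-and-paste mistakes. This sketch assumes the `K10<hex>::<user>:<password>` layout visible in the token above. `is_k3s_token` and `agent_join_cmd` are hypothetical helpers. The regex is only a plausibility check, not the validation k3s itself performs.

```shell
#!/bin/sh
# Hypothetical check: does the string look like "K10<hex>::<user>:<password>"?
is_k3s_token() {
  printf '%s\n' "$1" | grep -Eq '^K10[0-9a-f]+::[^:]+:[^:]+$'
}

# Hypothetical helper: print the agent install one-liner for a server IP and token.
# Port 6443 matches the K3S_URL used above.
agent_join_cmd() {
  server_ip=$1
  token=$2
  if ! is_k3s_token "$token"; then
    echo "unexpected token format" >&2
    return 1
  fi
  printf 'curl -sfL https://get.k3s.io | K3S_URL=https://%s:6443 K3S_TOKEN=%s sh -\n' "$server_ip" "$token"
}
```

The helper prints the command instead of running it, so you can review it before executing it on the agent.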

Now let's check the state of the cluster.

Checking the installation

- Run on the server

The output confirms that the cluster is up and ready for trying out Kubernetes.

```
# kubectl cluster-info
Kubernetes master is running at https://127.0.0.1:6443
CoreDNS is running at https://127.0.0.1:6443/api/v1/namespaces/kube-system/services/kube-dns:dns/proxy
Metrics-server is running at https://127.0.0.1:6443/api/v1/namespaces/kube-system/services/https:metrics-server:/proxy

To further debug and diagnose cluster problems, use 'kubectl cluster-info dump'.
# kubectl get nodes -o wide
NAME         STATUS   ROLES    AGE   VERSION        INTERNAL-IP      EXTERNAL-IP   OS-IMAGE                KERNEL-VERSION               CONTAINER-RUNTIME
k3s-server   Ready    master   81m   v1.19.3+k3s3   192.168.128.23   <none>        CentOS Linux 7 (Core)   3.10.0-1062.1.2.el7.x86_64   containerd://1.4.1-k3s1
k3s-agent    Ready    <none>   67m   v1.19.3+k3s3   192.168.128.26   <none>        CentOS Linux 7 (Core)   3.10.0-1062.1.2.el7.x86_64   containerd://1.4.1-k3s1
# kubectl get all --all-namespaces
NAMESPACE     NAME                                         READY   STATUS      RESTARTS   AGE
kube-system   pod/metrics-server-7b4f8b595-jgnql           1/1     Running     0          81m
kube-system   pod/local-path-provisioner-7ff9579c6-vxf5p   1/1     Running     0          81m
kube-system   pod/coredns-66c464876b-9tjjs                 1/1     Running     0          81m
kube-system   pod/helm-install-traefik-x92fq               0/1     Completed   0          81m
kube-system   pod/svclb-traefik-wtm4x                      2/2     Running     0          80m
kube-system   pod/traefik-5dd496474-64zdf                  1/1     Running     0          80m
kube-system   pod/svclb-traefik-7ztm9                      2/2     Running     0          67m

NAMESPACE     NAME                         TYPE           CLUSTER-IP     EXTERNAL-IP      PORT(S)                      AGE
default       service/kubernetes           ClusterIP      10.43.0.1      <none>           443/TCP                      81m
kube-system   service/kube-dns             ClusterIP      10.43.0.10     <none>           53/UDP,53/TCP,9153/TCP       81m
kube-system   service/metrics-server       ClusterIP      10.43.90.155   <none>           443/TCP                      81m
kube-system   service/traefik-prometheus   ClusterIP      10.43.82.77    <none>           9100/TCP                     80m
kube-system   service/traefik              LoadBalancer   10.43.143.86   192.168.128.23   80:30876/TCP,443:31599/TCP   80m

NAMESPACE     NAME                           DESIRED   CURRENT   READY   UP-TO-DATE   AVAILABLE   NODE SELECTOR   AGE
kube-system   daemonset.apps/svclb-traefik   2         2         2       2            2           <none>          80m

NAMESPACE     NAME                                     READY   UP-TO-DATE   AVAILABLE   AGE
kube-system   deployment.apps/metrics-server           1/1     1            1           81m
kube-system   deployment.apps/local-path-provisioner   1/1     1            1           81m
kube-system   deployment.apps/coredns                  1/1     1            1           81m
kube-system   deployment.apps/traefik                  1/1     1            1           80m

NAMESPACE     NAME                                               DESIRED   CURRENT   READY   AGE
kube-system   replicaset.apps/metrics-server-7b4f8b595           1         1         1       81m
kube-system   replicaset.apps/local-path-provisioner-7ff9579c6   1         1         1       81m
kube-system   replicaset.apps/coredns-66c464876b                 1         1         1       81m
kube-system   replicaset.apps/traefik-5dd496474                  1         1         1       80m

NAMESPACE     NAME                             COMPLETIONS   DURATION   AGE
kube-system   job.batch/helm-install-traefik   1/1           62s        81m
```
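You can also script the node check. This is a minimal sketch that assumes the `kubectl get nodes` column layout shown above, with STATUS in the second column. `summarize_nodes` is a hypothetical name. If you only need to block until every node is Ready, kubectl's own `kubectl wait --for=condition=Ready nodes --all --timeout=120s` is the sturdier option.

```shell
#!/bin/sh
# Hypothetical helper: read `kubectl get nodes` output on stdin (header first)
# and print "<ready>/<total> Ready". Only an exact "Ready" STATUS counts.
summarize_nodes() {
  awk 'NR > 1 { total++; if ($2 == "Ready") ready++ }
       END { printf "%d/%d Ready\n", ready, total }'
}
```

On the server: `kubectl get nodes | summarize_nodes`.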

Starting a simple nginx pod

- Run on the server

```
# kubectl run nginx --image=nginx:1.19
pod/nginx created
# kubectl get pods -o wide
NAME    READY   STATUS    RESTARTS   AGE     IP          NODE        NOMINATED NODE   READINESS GATES
nginx   1/1     Running   0          3m19s   10.42.1.3   k3s-agent   <none>           <none>
```

The pod is running on the agent.
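To find which node a pod landed on from a script, `kubectl get pod nginx -o jsonpath='{.spec.nodeName}'` is the robust route. If you only have the `-o wide` text above, this sketch finds the NODE column from the header instead of hard-coding its position. It takes the first `NODE` header field, so the later "NOMINATED NODE" header is not picked up. `pod_nodes` is a hypothetical helper. It assumes every column value is a single word, which holds for the output above but not for every kubectl version.

```shell
#!/bin/sh
# Hypothetical helper: read `kubectl get pods -o wide` on stdin and print
# "<pod> <node>" for each pod, using the first header field named NODE.
pod_nodes() {
  awk 'NR == 1 {
         for (i = 1; i <= NF; i++) if ($i == "NODE" && !col) col = i
         next
       }
       col { print $1, $col }'
}
```

On the server: `kubectl get pods -o wide | pod_nodes`.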

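Next, let's make it a little more involved: use a Deployment, which manages a ReplicaSet, to start several pods, and expose them externally through a NodePort Service.

The YAML definition is as follows.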

```yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: sample-deployment
spec:
  replicas: 3
  selector:
    matchLabels:
      app: sample-app
  template:
    metadata:
      labels:
        app: sample-app
    spec:
      containers:
        - name: nginx-container
          image: nginx:1.19
---
apiVersion: v1
kind: Service
metadata:
  name: sample-nodeport
spec:
  type: NodePort
  ports:
    - name: "http-port"
      protocol: "TCP"
      port: 8080
      targetPort: 80
      nodePort: 30080
  selector:
    app: sample-app
```
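The Service maps three ports:

- `nodePort` 30080 is opened on every node.
- `port` 8080 is served on the Service's ClusterIP.
- `targetPort` 80 is the nginx container port.

A `nodePort` has to fall inside the API server's NodePort range, which is 30000-32767 unless it is changed with `--service-node-port-range`. The check below is a hypothetical pre-flight helper that assumes that default range.

```shell
#!/bin/sh
# Hypothetical pre-flight check: succeed only if $1 is an integer inside the
# default Kubernetes NodePort range 30000-32767 (a custom range is ignored).
valid_nodeport() {
  case $1 in
    ''|*[!0-9]*) return 1 ;;  # empty or non-numeric
  esac
  [ "$1" -ge 30000 ] && [ "$1" -le 32767 ]
}
```

For example, `valid_nodeport 30080 && echo ok` succeeds, while `valid_nodeport 8080` fails because 8080 is the Service port, not a node port.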

Apply it on the server.

```
# cat <<EOF > sample-app.yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: sample-deployment
spec:
  replicas: 3
  selector:
    matchLabels:
      app: sample-app
  template:
    metadata:
      labels:
        app: sample-app
    spec:
      containers:
        - name: nginx-container
          image: nginx:1.19
---
apiVersion: v1
kind: Service
metadata:
  name: sample-nodeport
spec:
  type: NodePort
  ports:
    - name: "http-port"
      protocol: "TCP"
      port: 8080
      targetPort: 80
      nodePort: 30080
  selector:
    app: sample-app
EOF
# kubectl create -f sample-app.yaml
deployment.apps/sample-deployment created
service/sample-nodeport created
# kubectl get all -n default
NAME                                     READY   STATUS    RESTARTS   AGE
pod/nginx                                1/1     Running   0          11m
pod/sample-deployment-85cc985d9c-zff9p   1/1     Running   0          44s
pod/sample-deployment-85cc985d9c-xmmth   1/1     Running   0          44s
pod/sample-deployment-85cc985d9c-44bw2   1/1     Running   0          44s

NAME                      TYPE        CLUSTER-IP      EXTERNAL-IP   PORT(S)          AGE
service/kubernetes        ClusterIP   10.43.0.1       <none>        443/TCP          125m
service/sample-nodeport   NodePort    10.43.166.238   <none>        8080:30080/TCP   44s

NAME                                READY   UP-TO-DATE   AVAILABLE   AGE
deployment.apps/sample-deployment   3/3     3            3           44s

NAME                                           DESIRED   CURRENT   READY   AGE
replicaset.apps/sample-deployment-85cc985d9c   3         3         3       44s
```

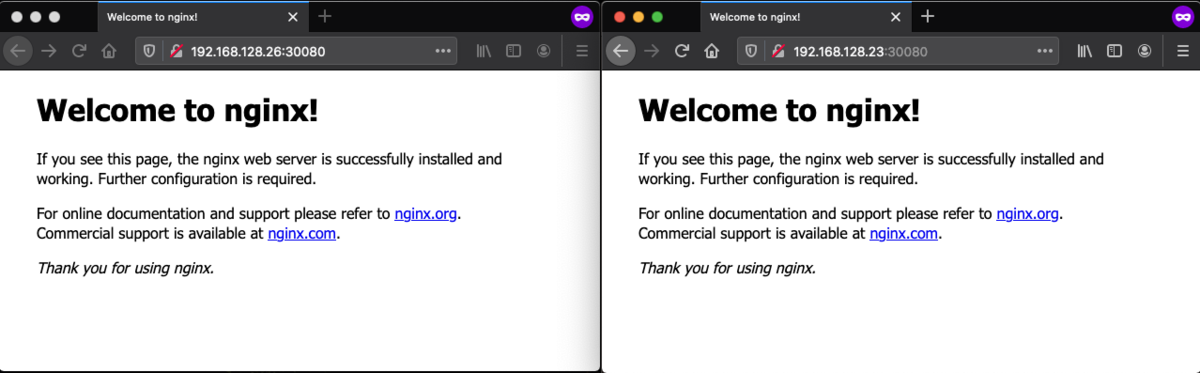

Because this is a NodePort Service, the nginx page can be reached on port 30080 at either the server's or the agent's IP address.
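To check every node at once, turn the `PORT(S)` value from `kubectl get svc` into one URL per node IP. `kubectl get svc sample-nodeport -o jsonpath='{.spec.ports[0].nodePort}'` gives the node port directly. The sketch below parses the `8080:30080/TCP` text form instead. `nodeport_urls` is a hypothetical helper, and the IPs are the ones from the table above.

```shell
#!/bin/sh
# Hypothetical helper: $1 is a PORT(S) value such as "8080:30080/TCP";
# the remaining arguments are node IPs. Prints one URL per node.
nodeport_urls() {
  ports=$1
  shift
  nodeport=${ports#*:}        # "30080/TCP"
  nodeport=${nodeport%%/*}    # "30080"
  for ip in "$@"; do
    printf 'http://%s:%s/\n' "$ip" "$nodeport"
  done
}
```

Usage from any machine that can reach the VMs: `for u in $(nodeport_urls 8080:30080/TCP 192.168.128.23 192.168.128.26); do curl -s -o /dev/null -w "%{http_code} $u\n" "$u"; done`.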

Uninstalling

- k3s provides dedicated uninstall scripts, so we run those.

- Before that, just to be safe, I delete the agent node from the cluster first.

- Run on the server

```
# kubectl get nodes -o wide
NAME         STATUS   ROLES    AGE    VERSION        INTERNAL-IP      EXTERNAL-IP   OS-IMAGE                KERNEL-VERSION               CONTAINER-RUNTIME
k3s-agent    Ready    <none>   172m   v1.19.3+k3s3   192.168.128.26   <none>        CentOS Linux 7 (Core)   3.10.0-1062.1.2.el7.x86_64   containerd://1.4.1-k3s1
k3s-server   Ready    master   3h6m   v1.19.3+k3s3   192.168.128.23   <none>        CentOS Linux 7 (Core)   3.10.0-1062.1.2.el7.x86_64   containerd://1.4.1-k3s1
# kubectl delete node k3s-agent
node "k3s-agent" deleted
# kubectl get nodes -o wide
NAME         STATUS   ROLES    AGE    VERSION        INTERNAL-IP      EXTERNAL-IP   OS-IMAGE                KERNEL-VERSION               CONTAINER-RUNTIME
k3s-server   Ready    master   3h6m   v1.19.3+k3s3   192.168.128.23   <none>        CentOS Linux 7 (Core)   3.10.0-1062.1.2.el7.x86_64   containerd://1.4.1-k3s1
```
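`kubectl delete node` removes the Node object without evicting its pods first. A more cautious order is to drain the node, then delete it. This sketch assumes kubectl's standard `drain` flags:

- `--ignore-daemonsets` is needed because `svclb-traefik` runs as a DaemonSet on every node.
- `--force` is needed because the bare `nginx` pod created with `kubectl run` is not managed by a controller.

Depending on where pods were scheduled, kubectl may also ask for `--delete-local-data` (renamed `--delete-emptydir-data` in later releases). The `KUBECTL` variable is a hypothetical hook that lets you dry-run the commands with `KUBECTL=echo`.

```shell
#!/bin/sh
# Hypothetical helper: drain a node, then remove it from the cluster.
# Set KUBECTL=echo to print the commands instead of running them.
remove_node() {
  node=$1
  kubectl_cmd=${KUBECTL:-kubectl}
  # DaemonSet pods (svclb-traefik) are skipped; bare pods are deleted via --force.
  "$kubectl_cmd" drain "$node" --ignore-daemonsets --force &&
    "$kubectl_cmd" delete node "$node"
}
```

On the server: `KUBECTL=echo remove_node k3s-agent` to preview, then `remove_node k3s-agent` to run it.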

With the agent node removed, run the uninstall scripts.

The output is a bit long, but it shows exactly what the scripts do, so I have left it unabridged.
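Which script to run depends on the node's role. The agent uses `k3s-agent-uninstall.sh` and the server uses `k3s-uninstall.sh`, both in `/usr/local/bin`. This hypothetical convenience helper picks whichever one is present. The directory argument exists only so you can try it against a scratch directory.

```shell
#!/bin/sh
# Hypothetical helper: print the path of the k3s uninstall script on this node.
# $1 is the install directory (default /usr/local/bin, as in the traces).
k3s_uninstall_script() {
  bin_dir=${1:-/usr/local/bin}
  if [ -f "$bin_dir/k3s-uninstall.sh" ]; then
    echo "$bin_dir/k3s-uninstall.sh"          # server node
  elif [ -f "$bin_dir/k3s-agent-uninstall.sh" ]; then
    echo "$bin_dir/k3s-agent-uninstall.sh"    # agent node
  else
    echo "no k3s uninstall script in $bin_dir" >&2
    return 1
  fi
}
```

On either node: `sh "$(k3s_uninstall_script)"`.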

- On the agent

```
# sh /usr/local/bin/k3s-agent-uninstall.sh
++ id -u
+ '[' 0 -eq 0 ']'
+ /usr/local/bin/k3s-killall.sh
+ for service in '/etc/systemd/system/k3s*.service'
+ '[' -s /etc/systemd/system/k3s-agent.service ']'
++ basename /etc/systemd/system/k3s-agent.service
+ systemctl stop k3s-agent.service
+ for service in '/etc/init.d/k3s*'
+ '[' -x '/etc/init.d/k3s*' ']'
+ killtree
+ kill -9
+ do_unmount /run/k3s
+ awk -v path=/run/k3s '$2 ~ ("^" path) { print $2 }' /proc/self/mounts
+ sort -r
+ xargs -r -t -n 1 umount
+ do_unmount /var/lib/rancher/k3s
+ sort -r
+ xargs -r -t -n 1 umount
+ awk -v path=/var/lib/rancher/k3s '$2 ~ ("^" path) { print $2 }' /proc/self/mounts
+ do_unmount /var/lib/kubelet/pods
+ sort -r
+ xargs -r -t -n 1 umount
+ awk -v path=/var/lib/kubelet/pods '$2 ~ ("^" path) { print $2 }' /proc/self/mounts
+ do_unmount /run/netns/cni-
+ sort -r
+ xargs -r -t -n 1 umount
+ awk -v path=/run/netns/cni- '$2 ~ ("^" path) { print $2 }' /proc/self/mounts
+ read ignore iface ignore
+ ip link show
+ grep 'master cni0'
+ ip link delete cni0
+ ip link delete flannel.1
+ rm -rf /var/lib/cni/
+ grep -v KUBE-
+ grep -v CNI-
+ iptables-restore
+ iptables-save
+ which systemctl
/usr/bin/systemctl
+ systemctl disable k3s-agent
Removed symlink /etc/systemd/system/multi-user.target.wants/k3s-agent.service.
+ systemctl reset-failed k3s-agent
+ systemctl daemon-reload
+ which rc-update
which: no rc-update in (/usr/local/sbin:/usr/local/bin:/usr/sbin:/usr/bin:/root/bin)
+ rm -f /etc/systemd/system/k3s-agent.service
+ rm -f /etc/systemd/system/k3s-agent.service.env
+ trap remove_uninstall EXIT
+ for cmd in kubectl crictl ctr
+ '[' -L /usr/local/bin/kubectl ']'
+ rm -f /usr/local/bin/kubectl
+ for cmd in kubectl crictl ctr
+ '[' -L /usr/local/bin/crictl ']'
+ rm -f /usr/local/bin/crictl
+ for cmd in kubectl crictl ctr
+ '[' -L /usr/local/bin/ctr ']'
+ rm -f /usr/local/bin/ctr
+ rm -rf /etc/rancher/k3s
+ rm -rf /run/k3s
+ rm -rf /run/flannel
+ rm -rf /var/lib/rancher/k3s
+ rm -rf /var/lib/kubelet
+ rm -f /usr/local/bin/k3s
+ rm -f /usr/local/bin/k3s-killall.sh
+ remove_uninstall
+ rm -f /usr/local/bin/k3s-agent-uninstall.sh
# systemctl status k3s
Unit k3s.service could not be found.
```
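Two steps in the trace are worth unpacking:

- `do_unmount` reads `/proc/self/mounts` and keeps the mount points whose path starts with a given prefix. It sorts them in reverse so that nested mounts are unmounted before their parents.
- The iptables step pipes `iptables-save` through `grep -v` to drop every line mentioning `KUBE-` or `CNI-`, then loads the rest back with `iptables-restore`.

The sketch reproduces both filters as read-only functions. It takes input from a file or stdin, so you can try it without root. The function names are mine, not the script's.

```shell
#!/bin/sh
# List mount points under a prefix. A path that extends another sorts after it,
# so `sort -r` puts children before parents. $2 defaults to /proc/self/mounts.
mounts_under() {
  prefix=$1
  mounts=${2:-/proc/self/mounts}
  awk -v path="$prefix" '$2 ~ ("^" path) { print $2 }' "$mounts" | sort -r
}

# Drop every iptables-save line (read from stdin) that mentions KUBE- or CNI-.
strip_k8s_rules() {
  grep -v KUBE- | grep -v CNI-
}

# What the uninstall script effectively runs (root only, destructive):
#   mounts_under /run/k3s | xargs -r -t -n 1 umount
#   iptables-save | strip_k8s_rules | iptables-restore
```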

- On the server

```
# sh /usr/local/bin/k3s-uninstall.sh
++ id -u
+ '[' 0 -eq 0 ']'
+ /usr/local/bin/k3s-killall.sh
+ for service in '/etc/systemd/system/k3s*.service'
+ '[' -s /etc/systemd/system/k3s.service ']'
++ basename /etc/systemd/system/k3s.service
+ systemctl stop k3s.service
+ for service in '/etc/init.d/k3s*'
+ '[' -x '/etc/init.d/k3s*' ']'
+ killtree 1755 1817 1837 2537 2599 14783 22102 22103 22204 22425
+ kill -9 1755 1782 2028 1817 1897 1952 1837 1907 2070 2537 2555 2666 2709 2599 2617 2786 14783 14803 14953 14998 22102 22154 22296 22375 22103 22139 22306 22374 22204 22222 22544 22425 22447 22791
+ do_unmount /run/k3s
+ xargs -r -t -n 1 umount
+ awk -v path=/run/k3s '$2 ~ ("^" path) { print $2 }' /proc/self/mounts
+ sort -r
umount /run/k3s/containerd/io.containerd.runtime.v2.task/k8s.io/f3c7d09a32ad5c8cfb3ba7c6eff2e1f1e4050513dfd337003800fc52bd12e556/rootfs
umount /run/k3s/containerd/io.containerd.runtime.v2.task/k8s.io/e5c4bf68e51e2d3a96f214414e297df73f2caaf4c073a9ca55181e4c2d273513/rootfs
umount /run/k3s/containerd/io.containerd.runtime.v2.task/k8s.io/be5b76cc8349acf01fcaccd4e644ad276602316314de911c0a1f9e3889ff61bf/rootfs
umount /run/k3s/containerd/io.containerd.runtime.v2.task/k8s.io/a86e3ea2d0f623a3d8c82f9f5a616c18006ecd86c9f7d70209ef370454d4c62b/rootfs
umount /run/k3s/containerd/io.containerd.runtime.v2.task/k8s.io/a13b1be939373049e5f44e342a26bee583b78ca3d16dac5c5ac0a25d07136c32/rootfs
umount /run/k3s/containerd/io.containerd.runtime.v2.task/k8s.io/9824ef29d876d45cb62fdcb22bc06096a4ffb1cf8a5f7b2b76bb828a18d6ed8b/rootfs
umount /run/k3s/containerd/io.containerd.runtime.v2.task/k8s.io/969f4e538857b36527464e403e8fd10bc4ec56148d6596f361f101c984886b62/rootfs
umount /run/k3s/containerd/io.containerd.runtime.v2.task/k8s.io/87653e291576f28db869dc8f1b490606c176b3bef158626af3074ac4851e33bc/rootfs
umount /run/k3s/containerd/io.containerd.runtime.v2.task/k8s.io/78d81ea5e5cfadc08735068e966bbc849e6aa401a64421f3e5837327c302077e/rootfs
umount /run/k3s/containerd/io.containerd.runtime.v2.task/k8s.io/69ccc8d2185c4049903a730eee4c55f920ac9c0eb4ea58e29b5c0ce3bce171fd/rootfs
umount /run/k3s/containerd/io.containerd.runtime.v2.task/k8s.io/4fe5d8afd6399e1154899fe0426d40c0705511164ed7ed9c2eaa62e754f7fd6a/rootfs
umount /run/k3s/containerd/io.containerd.runtime.v2.task/k8s.io/4817814ae99b99b3245c684df553337a254276a6d1a67a6e663b3cb44e698adb/rootfs
umount /run/k3s/containerd/io.containerd.runtime.v2.task/k8s.io/43018d27267e76675ccfccb5f2bff44cd958cdc1b395bafd42dd6a85e302b383/rootfs
umount /run/k3s/containerd/io.containerd.runtime.v2.task/k8s.io/416f18f95852af17b264bc2edad06e81bc31080d32eb82e93eabd95531b23349/rootfs
umount /run/k3s/containerd/io.containerd.runtime.v2.task/k8s.io/3c8ce42af2d1a12043e7a68b915527077279035f4a89a0014ae465fd17e10847/rootfs
umount /run/k3s/containerd/io.containerd.runtime.v2.task/k8s.io/2fc4283627fa75a98c0bc69d2b361bc7ee0bb4ac9a1a5ac46b6baefbd7476cda/rootfs
umount /run/k3s/containerd/io.containerd.runtime.v2.task/k8s.io/2ad25f4eb64a1e8a90ef742ae18ee1b00c3f553af249782c14854d4c8f6bfae0/rootfs
umount /run/k3s/containerd/io.containerd.runtime.v2.task/k8s.io/23c1b6c10d4d712e5c0ae05b29302293cf17864f559cf2fea92b8228d771f974/rootfs
umount /run/k3s/containerd/io.containerd.runtime.v2.task/k8s.io/1f560c4ba2ae8180c7e6d33c259b8a1eb80a0edbc4928a29cb1d4a6b8c228039/rootfs
umount /run/k3s/containerd/io.containerd.runtime.v2.task/k8s.io/0e50784f76761f20b65825b85de24aaea7c8c9aec0ff9414e5d0e0ffccd3c75d/rootfs
umount /run/k3s/containerd/io.containerd.runtime.v2.task/k8s.io/0135f4349da8468c78c2b49da084e95c061a764a0829bf8e733edcf0d998ded5/rootfs
umount /run/k3s/containerd/io.containerd.grpc.v1.cri/sandboxes/f3c7d09a32ad5c8cfb3ba7c6eff2e1f1e4050513dfd337003800fc52bd12e556/shm
umount /run/k3s/containerd/io.containerd.grpc.v1.cri/sandboxes/e5c4bf68e51e2d3a96f214414e297df73f2caaf4c073a9ca55181e4c2d273513/shm
umount /run/k3s/containerd/io.containerd.grpc.v1.cri/sandboxes/be5b76cc8349acf01fcaccd4e644ad276602316314de911c0a1f9e3889ff61bf/shm
umount /run/k3s/containerd/io.containerd.grpc.v1.cri/sandboxes/a13b1be939373049e5f44e342a26bee583b78ca3d16dac5c5ac0a25d07136c32/shm
umount /run/k3s/containerd/io.containerd.grpc.v1.cri/sandboxes/969f4e538857b36527464e403e8fd10bc4ec56148d6596f361f101c984886b62/shm
umount /run/k3s/containerd/io.containerd.grpc.v1.cri/sandboxes/87653e291576f28db869dc8f1b490606c176b3bef158626af3074ac4851e33bc/shm
umount /run/k3s/containerd/io.containerd.grpc.v1.cri/sandboxes/78d81ea5e5cfadc08735068e966bbc849e6aa401a64421f3e5837327c302077e/shm
umount /run/k3s/containerd/io.containerd.grpc.v1.cri/sandboxes/4817814ae99b99b3245c684df553337a254276a6d1a67a6e663b3cb44e698adb/shm
umount /run/k3s/containerd/io.containerd.grpc.v1.cri/sandboxes/23c1b6c10d4d712e5c0ae05b29302293cf17864f559cf2fea92b8228d771f974/shm
umount /run/k3s/containerd/io.containerd.grpc.v1.cri/sandboxes/1f560c4ba2ae8180c7e6d33c259b8a1eb80a0edbc4928a29cb1d4a6b8c228039/shm
+ do_unmount /var/lib/rancher/k3s
+ xargs -r -t -n 1 umount
+ awk -v path=/var/lib/rancher/k3s '$2 ~ ("^" path) { print $2 }' /proc/self/mounts
+ sort -r
+ do_unmount /var/lib/kubelet/pods
+ sort -r
+ xargs -r -t -n 1 umount
+ awk -v path=/var/lib/kubelet/pods '$2 ~ ("^" path) { print $2 }' /proc/self/mounts
umount /var/lib/kubelet/pods/f8e18350-11ba-441d-afed-db25934f906e/volumes/kubernetes.io~secret/coredns-token-b9ffd
umount /var/lib/kubelet/pods/b92fc364-7ba1-44d2-b82a-a1f6c0e64bbe/volumes/kubernetes.io~secret/default-token-79pl6
umount /var/lib/kubelet/pods/8c656086-8f4e-4abc-bfe9-9634b411cf73/volumes/kubernetes.io~secret/default-token-79pl6
umount /var/lib/kubelet/pods/8c2c9c75-8a2c-443b-a8e0-7664855087b8/volumes/kubernetes.io~secret/default-token-m2jcp
umount /var/lib/kubelet/pods/4206b991-ff8f-40df-ab59-4793dc29a351/volumes/kubernetes.io~secret/kubernetes-dashboard-token-sw429
umount /var/lib/kubelet/pods/340715e3-b520-4527-b00a-4ca397373a45/volumes/kubernetes.io~secret/traefik-token-56jkz
umount /var/lib/kubelet/pods/340715e3-b520-4527-b00a-4ca397373a45/volumes/kubernetes.io~secret/ssl
umount /var/lib/kubelet/pods/1db035d9-a420-468e-9425-9f2c280494e3/volumes/kubernetes.io~secret/kubernetes-dashboard-token-sw429
umount /var/lib/kubelet/pods/1db035d9-a420-468e-9425-9f2c280494e3/volumes/kubernetes.io~secret/kubernetes-dashboard-certs
umount /var/lib/kubelet/pods/1d5efe23-b957-4b69-8fd1-6b773332ca12/volumes/kubernetes.io~secret/local-path-provisioner-service-account-token-vrfsd
umount /var/lib/kubelet/pods/1ccfdee1-81d7-4e95-99c8-9ab0eead01f5/volumes/kubernetes.io~secret/metrics-server-token-jhj89
umount /var/lib/kubelet/pods/0a0bcfb6-61f0-4a33-9480-40a5a75c147d/volumes/kubernetes.io~secret/default-token-79pl6
+ do_unmount /run/netns/cni-
+ xargs -r -t -n 1 umount
+ awk -v path=/run/netns/cni- '$2 ~ ("^" path) { print $2 }' /proc/self/mounts
+ sort -r
umount /run/netns/cni-c2f82672-1b96-e506-ac5b-1f07f25800c2
umount /run/netns/cni-c2ae6350-5039-18f5-798d-be09a81a04bf
umount /run/netns/cni-b85f6a5d-5612-9a93-3fc1-23a91b90c8be
umount /run/netns/cni-a9b294a8-f0ae-09c4-e895-c8f0056468fe
umount /run/netns/cni-8ebfe16d-21fd-222b-5ef1-3af9c784c636
umount /run/netns/cni-8a29a30a-bfcd-d92f-c99f-d71c9ad49d83
umount /run/netns/cni-7b94dacb-da32-0005-9108-1e2c2de9b491
umount /run/netns/cni-2d6ca6ae-b776-e142-a137-70f7fffa8f9f
umount /run/netns/cni-153e782e-7177-ad88-e329-7a0733806f46
umount /run/netns/cni-06406680-9ae5-f815-95cb-9f7cf4cbd6e4
+ read ignore iface ignore
+ ip link show
+ grep 'master cni0'
+ iface=veth9e8235f0
+ '[' -z veth9e8235f0 ']'
+ ip link delete veth9e8235f0
+ read ignore iface ignore
+ iface=vethda58bd85
+ '[' -z vethda58bd85 ']'
+ ip link delete vethda58bd85
+ read ignore iface ignore
+ iface=veth425cb97f
+ '[' -z veth425cb97f ']'
+ ip link delete veth425cb97f
+ read ignore iface ignore
+ iface=vethba67606f
+ '[' -z vethba67606f ']'
+ ip link delete vethba67606f
Cannot find device "vethba67606f"
+ read ignore iface ignore
+ iface=veth33673995
+ '[' -z veth33673995 ']'
+ ip link delete veth33673995
Cannot find device "veth33673995"
+ read ignore iface ignore
+ ip link delete cni0
+ ip link delete flannel.1
+ rm -rf /var/lib/cni/
+ grep -v KUBE-
+ grep -v CNI-
+ iptables-restore
+ iptables-save
+ which systemctl
/usr/bin/systemctl
+ systemctl disable k3s
Removed symlink /etc/systemd/system/multi-user.target.wants/k3s.service.
+ systemctl reset-failed k3s
+ systemctl daemon-reload
+ which rc-update
which: no rc-update in (/usr/local/sbin:/usr/local/bin:/usr/sbin:/usr/bin:/root/bin)
+ rm -f /etc/systemd/system/k3s.service
+ rm -f /etc/systemd/system/k3s.service.env
+ trap remove_uninstall EXIT
+ for cmd in kubectl crictl ctr
+ '[' -L /usr/local/bin/kubectl ']'
+ rm -f /usr/local/bin/kubectl
+ for cmd in kubectl crictl ctr
+ '[' -L /usr/local/bin/crictl ']'
+ rm -f /usr/local/bin/crictl
+ for cmd in kubectl crictl ctr
+ '[' -L /usr/local/bin/ctr ']'
+ rm -f /usr/local/bin/ctr
+ rm -rf /etc/rancher/k3s
+ rm -rf /run/k3s
+ rm -rf /run/flannel
+ rm -rf /var/lib/rancher/k3s
+ rm -rf /var/lib/kubelet
+ rm -f /usr/local/bin/k3s
+ rm -f /usr/local/bin/k3s-killall.sh
+ remove_uninstall
+ rm -f /usr/local/bin/k3s-uninstall.sh
# systemctl status k3s
Unit k3s.service could not be found.
```

As the output shows, the scripts clean up quite thoroughly.
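To confirm the cleanup rather than rely on reading the trace, check that the directories and binary removed by the script's `rm` lines are really gone. This is a minimal sketch. `k3s_leftovers` and its optional root-prefix argument are hypothetical, and the path list is taken only from the trace above.

```shell
#!/bin/sh
# Hypothetical post-uninstall check: print any k3s path (from the trace's rm
# commands) that still exists. $1 is an optional root prefix for testing.
# Exit status 0 means nothing was left behind.
k3s_leftovers() {
  root=${1:-}
  found=0
  for p in /etc/rancher/k3s /run/k3s /run/flannel /var/lib/rancher/k3s \
           /var/lib/kubelet /var/lib/cni /usr/local/bin/k3s; do
    if [ -e "$root$p" ]; then
      echo "left over: $root$p"
      found=1
    fi
  done
  [ "$found" -eq 0 ]
}
```

On each node after uninstalling: `k3s_leftovers && echo "k3s fully removed"`.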

Next time, I'd like to install k3s on lightweight Alpine Linux and switch the CNI to Canal to build a Kubernetes test environment.